Self-Defeat Is Not a Problem for Agent-Relative Morality

At least not in the way you might think

It’s no secret that agent-relative normative theories are self-defeating under certain circumstances: when everyone successfully follows them, everyone ends up worse off. But while it is clear that they are self-defeating, it is much less clear what to make of this fact.

Our evil robot overlords (consequentialists) want us to think that this is a death sentence for these sorts of views. Among these utility-maximizing calculators, you will find r̶o̶b̶o̶t̶s̶ people like Richard Y. Chappell and Bentham's Bulldog telling us to run screaming from this realm of conflicting preferences. On the more tolerant end, you will find someone like Derek Parfit,¹ who allows for some degree of self-defeat, though he ultimately thinks that it’s a problem for moral theories. Michael Huemer seems to occupy a middle position: he thinks that self-defeat really is a problem for normative theories, yet he still holds to deontology. I want to push the tolerance a little further and say that it is actually not a problem for a moral theory if it’s self-defeating. This does not mean that agent-relative theories are completely let off the hook, though.

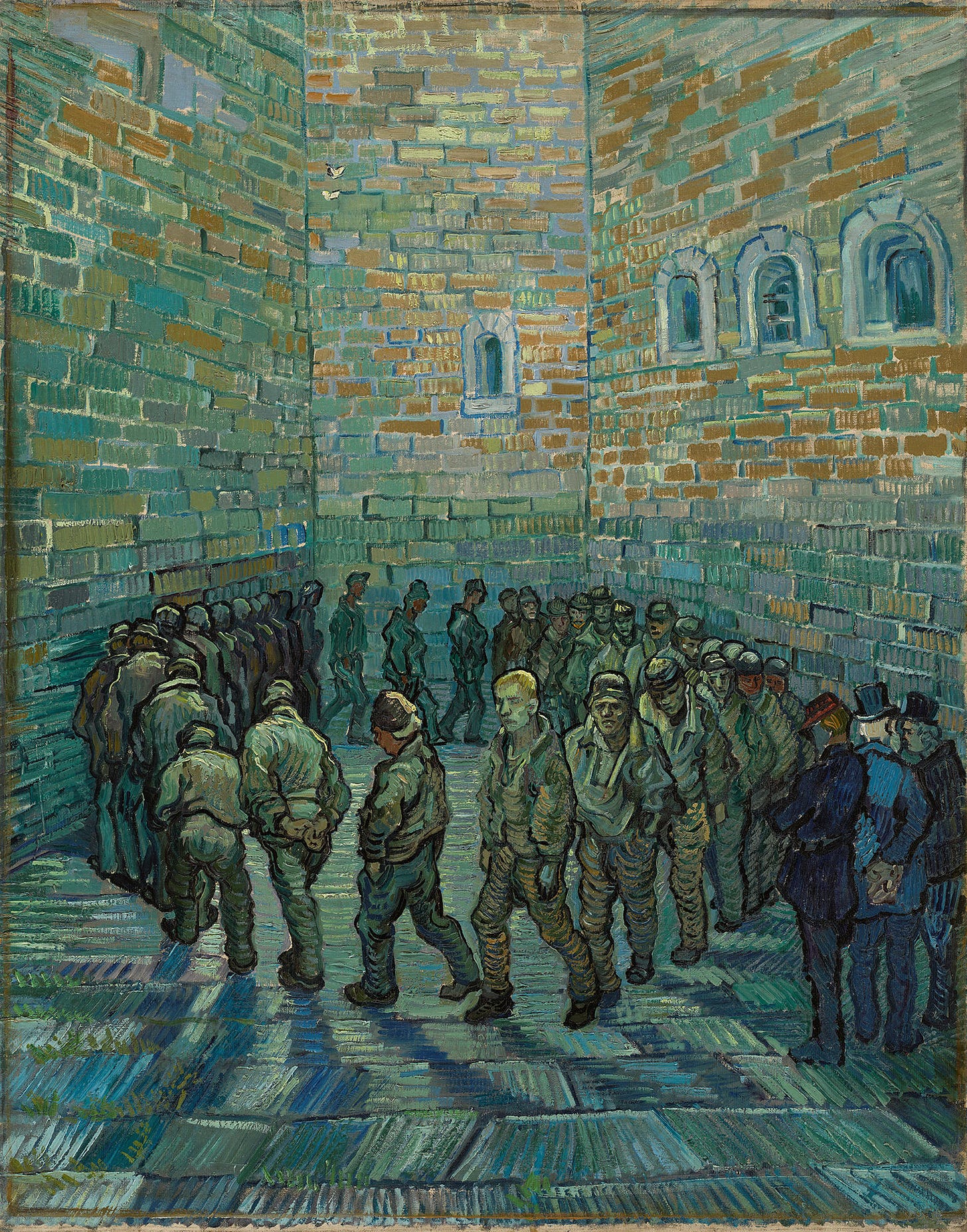

Prisoner’s Dilemmas

To start us off, we’ll revisit just why it is that agent-relative theories are self-defeating, doing so with the help of the standard prisoner’s dilemma:

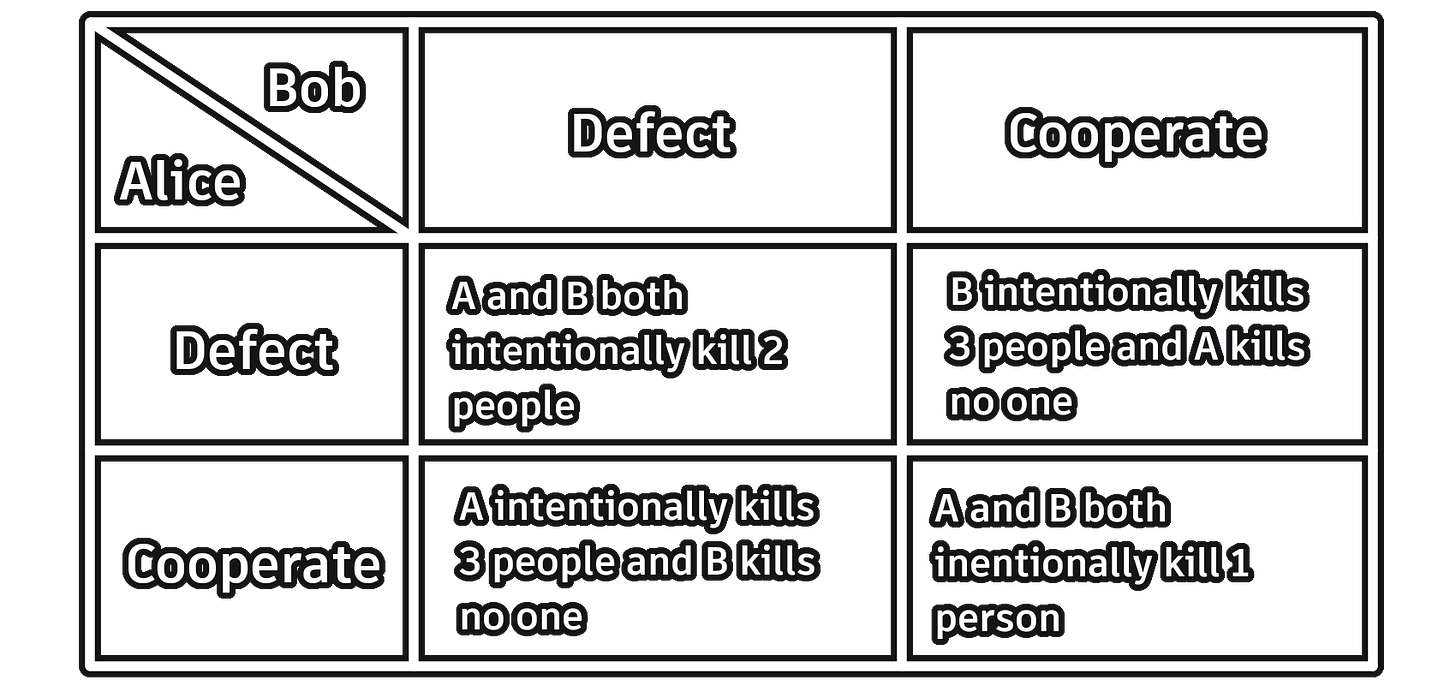

Alice and Bob have been very naughty! They have been going on killing sprees throughout town together. Sadly, there are no witnesses, on account of their all having been killed. Still, the police suspect that Alice and Bob are behind the killings, so they interrogate the two in separate rooms. Each is given two options: rat the other out (defect) or stay silent (cooperate). The possible outcomes are represented in this matrix:

The two are unable to communicate with each other, so they cannot conspire in any way. Assuming that Alice and Bob are both perfectly selfish (and don’t want to go to prison), what should they do? It looks like each should defect, since doing so always makes them better off, regardless of what the other chooses. The catch is that mutual defection produces the worst outcome for the two in total, and each would have been better off had both cooperated.
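Since the matrix itself is an image, here is a small Python sketch of the same reasoning. The payoffs are hypothetical (years in prison, so lower is better), chosen only so that they have the structure just described; they are not read off the post’s matrix. The script checks that defecting is each prisoner’s dominant choice and compares the combined prison time of each pair of choices.

```python
from itertools import product

# Hypothetical payoffs (NOT the post's actual matrix): years in prison, so lower is better.
# Keys are (Alice's choice, Bob's choice); values are (Alice's years, Bob's years).
C, D = "cooperate", "defect"
YEARS = {
    (C, C): (1, 1),
    (C, D): (6, 0),
    (D, C): (0, 6),
    (D, D): (4, 4),
}


def years_for(player, own, other):
    """Years in prison for `player` (0 = Alice, 1 = Bob), given their own and the other's choice."""
    profile = (own, other) if player == 0 else (other, own)
    return YEARS[profile][player]


def dominant_choice(player):
    """Return the choice giving `player` strictly fewer years whatever the other does, or None."""
    for own, alternative in ((C, D), (D, C)):
        if all(years_for(player, own, other) < years_for(player, alternative, other)
               for other in (C, D)):
            return own
    return None


def total_years(profile):
    """Combined prison time for both prisoners under a pair of choices."""
    return sum(YEARS[profile])


if __name__ == "__main__":
    print("Alice's dominant choice:", dominant_choice(0))
    print("Bob's dominant choice:", dominant_choice(1))
    for profile in product((C, D), repeat=2):
        print(profile, "years:", YEARS[profile], "total:", total_years(profile))
```

With these numbers, both prisoners’ dominant choice comes out as `defect`, yet (defect, defect) has the largest total (8 years) and leaves each prisoner worse off than (cooperate, cooperate).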

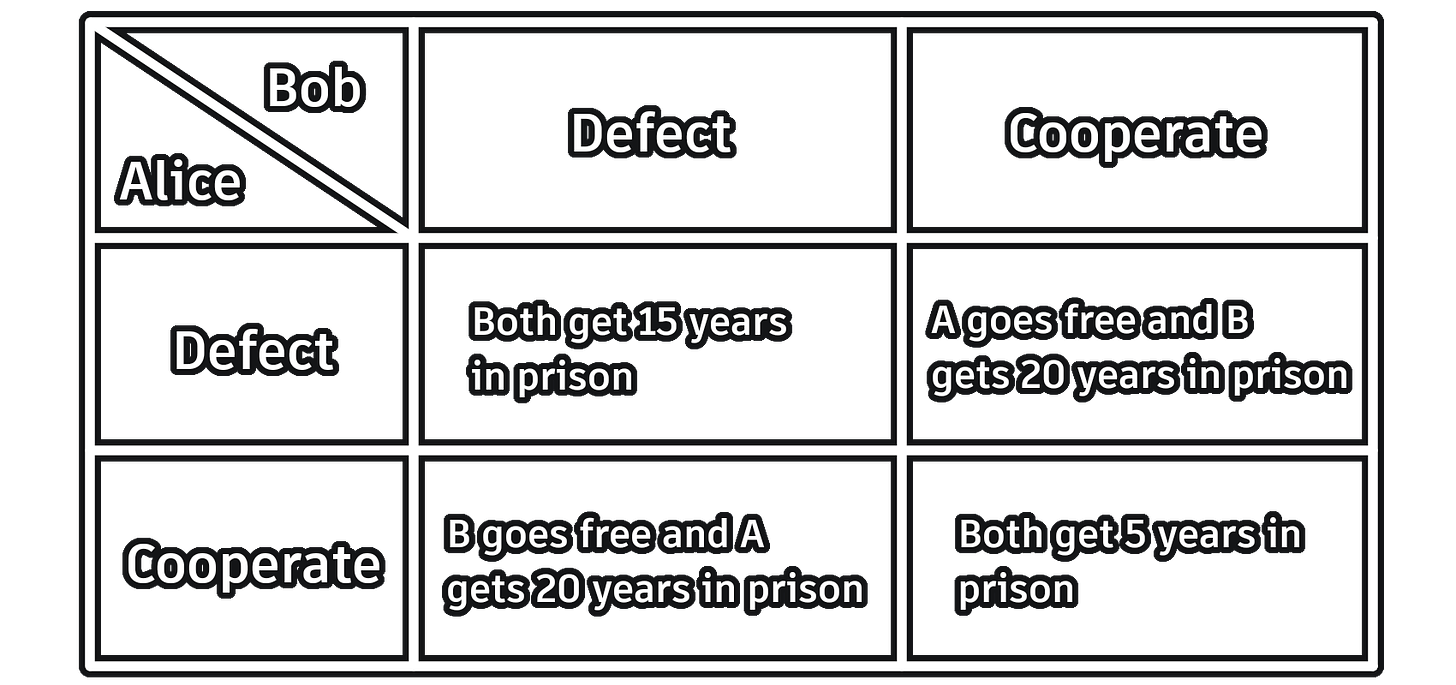

Self-interest theories are of course in good company here, as there are plenty of theories that are self-defeating in this sense. In fact, any agent-relative theory of normativity will be self-defeating in this way (assuming it doesn’t give agents binary goals). We can easily show this by constructing a more abstract version of the prisoner’s dilemma:

Here the theory is T. Ta denotes Alice’s theory-given aims, and Tb denotes Bob’s. Theories that fall prey to this include (but are not limited to) theories that invoke special obligations, (agent-centered) deontological theories, and altruism (where you count your own welfare lower than others’).
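A rough way to see why the abstract version generalizes: treat each agent’s T-given aims as something that can be achieved to a greater or lesser degree, and suppose that favoring your own aims improves them by some `gain` while setting back the other agent’s aims by some `cost`. The additive model, its symmetry between the two agents, and the names `gain` and `cost` are illustrative assumptions, not something the abstract matrix specifies. On this sketch, the game is a prisoner’s dilemma exactly when 0 < gain < cost.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentRelativeGame:
    """Hypothetical, symmetric two-agent model of an agent-relative theory T.

    Each agent either favors their own T-given aims or refrains. Favoring
    raises the agent's own aims by `gain` and lowers the *other* agent's aims
    by `cost`. The additive form is an illustrative assumption.
    """
    gain: float
    cost: float

    def achievement(self, own_favors: bool, other_favors: bool) -> float:
        """How well one agent's T-given aims are achieved (higher is better)."""
        return (self.gain if own_favors else 0.0) - (self.cost if other_favors else 0.0)

    def favoring_dominates(self) -> bool:
        """Favoring does better by one's own T-given aims whatever the other agent does."""
        return all(
            self.achievement(True, other) > self.achievement(False, other)
            for other in (False, True)
        )

    def mutual_favoring_is_worse(self) -> bool:
        """Both agents' aims are worse achieved when both favor than when both refrain."""
        return self.achievement(True, True) < self.achievement(False, False)

    def is_prisoners_dilemma(self) -> bool:
        return self.favoring_dominates() and self.mutual_favoring_is_worse()


if __name__ == "__main__":
    print(AgentRelativeGame(gain=1, cost=2).is_prisoners_dilemma())  # True
    print(AgentRelativeGame(gain=2, cost=1).is_prisoners_dilemma())  # False
```

In the second case there is no dilemma: mutual favoring leaves both agents’ aims better achieved than mutual restraint, because what each gains outweighs what the other takes away.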

This raises the question of what we should make of it. Is being self-defeating in this way a death sentence for a theory? I think it probably isn’t. Let’s take the self-interest theory as an example. What is supposed to be the problem here? The problem, supposedly, is that successfully following the theory makes the agents worse off. But this is not actually what is happening.

It may help here to highlight a distinction made by Parfit: individual versus collective direct self-defeat. A theory is individually directly self-defeating if successfully following it leads to your theory-given aims being worse achieved. A theory is directly collectively self-defeating, on the other hand, if everyone’s successfully following it leads to everyone’s theory-given aims being worse achieved.

It looks like the first sort of self-defeat is impossible. Assume that you successfully follow T. This means that you optimally achieve your T-given aims. For the theory to be individually self-defeating, doing so must result in your T-given aims being suboptimally achieved. But this contradicts the stipulation that you acted optimally, so it is impossible. In other words, you cannot achieve your aims suboptimally by achieving them optimally.

To make this more intuitive, imagine holding fixed the choices of everyone except a single agent, A. Whatever the state S of the world, A will always be better off by successfully following the self-interest theory (that’s just what it means to follow the theory!). If we then let other people make choices again, nothing changes: any choice anyone makes puts the world into a new state, but since S could be any state of the world, this includes all states brought about by other people. So in the prisoner’s dilemma, A is in no way failing to achieve her theory-given aims; it just happens that B is being annoying and screwing her over.

Direct collective self-defeat is very much possible, though, and it is what is happening in the prisoner’s dilemma: both parties successfully follow the self-interest theory, yet both parties’ self-interest-given aims are worse achieved for it. So some sort of self-defeat is happening.
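The distinction between individual and collective direct self-defeat can be made precise in a short sketch. It assumes two agents with finitely many choices, models “successfully following T” as choosing whatever best achieves your own T-given aims with the other agent’s choice held fixed (so “everyone follows T” is a pair of mutual best responses, what game theorists call a Nash equilibrium), and uses hypothetical prisoner’s-dilemma payoffs. The helper names such as `following_t` are made up for illustration. The individual check can never succeed, for exactly the reason given above; the collective check asks whether some outcome where everyone follows T is worse for everyone than some alternative outcome.

```python
from itertools import product
from typing import Dict, Sequence, Set, Tuple

Profile = Tuple[str, str]
# aims[(choice_0, choice_1)] = (how well agent 0's T-given aims are achieved,
#                               how well agent 1's are achieved); higher is better.
Aims = Dict[Profile, Tuple[float, float]]


def achievement(aims: Aims, player: int, own: str, other: str) -> float:
    """How well `player`'s T-given aims are achieved, given both agents' choices."""
    profile = (own, other) if player == 0 else (other, own)
    return aims[profile][player]


def following_t(aims: Aims, player: int, other: str, choices: Sequence[str]) -> Set[str]:
    """Choices that optimally achieve `player`'s T-given aims, holding the other's choice fixed."""
    best = max(achievement(aims, player, c, other) for c in choices)
    return {c for c in choices if achievement(aims, player, c, other) == best}


def individually_self_defeating(aims: Aims, choices: Sequence[str]) -> bool:
    """Does following T ever leave the follower's own aims worse achieved than an alternative would?"""
    for player, other in product((0, 1), choices):
        for chosen in following_t(aims, player, other, choices):
            if any(achievement(aims, player, alt, other) > achievement(aims, player, chosen, other)
                   for alt in choices):
                return True  # Can never fire: `chosen` already maximizes the player's aims.
    return False


def collectively_self_defeating(aims: Aims, choices: Sequence[str]) -> bool:
    """Is there an outcome where everyone follows T, yet another outcome achieves
    every agent's T-given aims strictly better?"""
    everyone_follows = [
        (c0, c1) for c0, c1 in product(choices, repeat=2)
        if c0 in following_t(aims, 0, c1, choices) and c1 in following_t(aims, 1, c0, choices)
    ]
    return any(
        all(aims[alt][i] > aims[followed][i] for i in (0, 1))
        for followed in everyone_follows
        for alt in aims
    )


if __name__ == "__main__":
    # Hypothetical prisoner's dilemma: aims measured as negative years in prison.
    pd = {("C", "C"): (-1, -1), ("C", "D"): (-6, 0), ("D", "C"): (0, -6), ("D", "D"): (-4, -4)}
    print(individually_self_defeating(pd, ("C", "D")))  # False
    print(collectively_self_defeating(pd, ("C", "D")))  # True
```

The only outcome where both follow the self-interest theory is (D, D), and (C, C) achieves both agents’ aims better, which is the collective self-defeat described above; no individual agent ever does worse by their own lights for following the theory.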

Moving from the Individual to the Collective Level

But it doesn’t really look like this is much of a problem for the self-interest theory. Who cares if everyone is being made worse off? I am as well off as I can be, and that’s all I care about; other people can sail their own ship. If we were able to communicate, we should of course try to work out some sort of agreement, since this would make us all (including me) better off. But since no communication is allowed, I am just making the most of it for myself.

There is a crucial difference between self-interest theories and agent-relative moral theories, though: self-interest theories have a very narrow scope, namely you, and the only goal of the theory, from your perspective, is that you follow it. Moral theories, by contrast, seem to have a wider scope. If I am a deontologist, it’s not just that I want myself to be a deontologist; rather, the theory has all moral agents as its scope and is supposed to be a guide to right action for everyone. If I believe a moral theory, then it seems that I implicitly think that everyone ought to abide by it. So things don’t look too good for collectively self-defeating moral theories. Who cares whether everyone’s moral aims are being worse achieved, so long as I achieve my aims as best I can? Well, I sure hope you do, if you are really espousing a moral theory.

I think this sort of self-defeat is especially bad for what we may call agent-relative consequentialist moralities. The goal of these theories is to promote the good, irrespective of means, but the good varies from person to person. Still, the theory is a moral one, meant to be collective in scope. One such theory is what we can call special-obligations utilitarianism. On this theory, it is better for each person to promote the good of those close to them than to promote the good of those far from them, but the type of action and the intentions behind it are irrelevant. This theory is obviously vulnerable to prisoner’s dilemmas (imagine that it is A’s and B’s mothers who will get prison time instead). Here the self-defeat seems especially vicious: your goal is to promote the good, but when everyone promotes the good, the good is promoted worse. By everyone picking the best action, the worst outcome is achieved. But on this theory, what makes an action good is simply that it leads to the best outcome! So the theory can truly be said to undermine itself.

The reason this is especially bad lies, I think, in the way the theory, in some very vague sense, walks the line between being agent-relative and being agent-neutral. While what is good varies from person to person, it is irrelevant who (or what) brings it about. It doesn’t matter whether you, I, or a rock kills my mother; it is equally bad and equally worth avoiding from my perspective. So I can’t just mind my own business and make sure that I don’t do anything wrong. Instead, I must care about what others do too, and about how that affects the good from my perspective, which makes me more receptive to considerations of collective action paradoxes.

On agent-centered deontological theories, on the other hand, the self-defeat looks much less vicious. Consider this variation of the prisoner’s dilemma:

Here, both parties choosing the option that is most in line with the duty not to kill results in more people being killed by both parties. Before we consider the case further, a quick side note: the hardline deontologist may have another way out here. After all, we directly control our actions and intentions, even if we don’t directly control the outcomes. We always have the option of holding our breath until we die, thereby never making a decision. I do think this lets hardline deontology off the hook with regard to self-defeat. The problem is that any theory on which this is always an option is ridiculously implausible for other reasons.

Suppose that you are given two options: either you intentionally kill a single innocent person, or else literally every living creature is tortured to death and then sent to hell for all eternity (including the person who would have been killed). The hardline deontologist will have to say that you should just do nothing. This is such an implausible consequence that I think we can dismiss this view out of hand. But any plausible view that gets this case right will also be susceptible to a prisoner’s dilemma: take the decision matrix above, and add that if you choose nothing, literally every living creature is tortured to death and then sent to hell for all eternity (including the people who would have been killed). Such dilemmas will then be very rare, but the theory will still, in principle, be directly collectively self-defeating.

Back to the case. Not only are more people killed as a result of people following their duties; more people are killed by the very people who follow their duties. This may seem bad, but it looks like the deontologist doesn’t have to worry too much: “I did my duty by choosing the option on which I would end up killing the fewest people. I don’t have a duty to stop other people from committing murder, only not to commit it myself, so it is of no interest to me whether someone else caused me to kill an extra person; had I not chosen as I did, I would have killed even more.” The deontologist is under no illusion that their theory will result in the fewest murders; if that were what they cared about, they would be a murder-consequentialist. So pointing out that following their duty leads to more people being killed is a moot point.

The difference here is that while the special-obligations utilitarian cares about the outcome that does the most good, the deontologist (qua deontologist) cares only about the type of action itself. This means that taking the action that leads to them killing the fewest people just is the right thing; it is inconsequential (pun intended) whether that action in fact leads to more people being killed overall. So while the deontological theory is a moral theory, and thus universal in scope, the scope for each individual is no more than their own actions. The theory is in some sense maximally agent-relative, in that there is no independent criterion of success other than people taking the right kinds of actions from their own perspectives. With special-obligations utilitarianism, we can at least say that some choice being made out there is bad, even if only from the perspective of a particular agent.

“But”, you retort, “you are still just looking at it from individual perspectives! No one is claiming that the theories are individually self-defeating. Rather, they are collectively self-defeating, so you have to look at them from a collective standpoint rather than from individual perspectives.” The problem with this is that there is no collective standpoint. We cannot judge how successfully a deontological theory is being followed from outside individual perspectives, as there are no duties outside these perspectives. We can of course look at the number of people living up to their duties, but that is not within the scope of the theory; the theory doesn’t tell us anything about people living up to their duties considered from an agent-neutral perspective. Rather, it tells you about your duties: you, the agent who has a perspective. So we can never pose these sorts of self-defeat as an internal critique of the theory. The critiques require that we take “the view from nowhere”, as Nagel puts it, but agent-relative views precisely deny that this view captures the whole story, and so the accusations of self-defeat get no traction from within the agent-relative theory.

Again, this response doesn’t work as well for special-obligations utilitarianism, I think. The reason is that right action on that view supervenes on the outcomes of actions. There is nothing about the action itself that inherently makes it right or wrong apart from the state of the world out there. This means that we really can say that agents are doing a worse job at achieving their theory-given aims by achieving their theory-given aims. On deontology, by contrast, the aim simply is to perform the right action, which everyone is doing.

At the same time, the response does in a sense work for both theories, because in raising this critique we are again looking at the theory from a collective or agent-neutral level. For the theory to be able to fail at a collective level, it must say something about the collective level, but it doesn’t! The theory (at least to the extent that it is agent-relative) has no collective aim; it only has agent-relative aims. So there is no way for it to fail at the collective level, since there is nothing at that level for it to fail at. Special-obligations utilitarianism doesn’t have as a collective aim that everyone promote their agent-relative good to the highest extent; if it did, it would just be regular utilitarianism (or rather, a version of it on which the utility of people with more family and friends counts for more). Likewise, agent-centered deontology does not have as a collective aim that everyone follow their duties to the highest extent; if it did, it would just be duties-consequentialism. So even though these theories are collective in scope, in virtue of being moral theories, they have no collective aims for self-defeat to frustrate; there is no collective level on which to judge them.

But while accusations of self-defeat cannot seem to get traction as internal critiques of agent-relative moral theories, they may still draw our attention to other problematic aspects of these theories.

Is Agent-Relative Morality Even Morality?

If you are like me, you probably don’t feel too satisfied with the responses my imagined relativists have given. While those responses save the theories from inconsistency, we seem to have pushed the theories into a corner, making them reveal their true selves. What I mean is that these theories just seem to get morality all wrong. The responses given, especially by agent-centered deontology, seem completely callous. It is as if the proponents are only concerned with not getting their hands dirty. Surely the reason why I shouldn’t kill you has to do with considerations about you. But for the agent-relative theory this is not so. Instead, the reason I shouldn’t kill you has to do with me and my aims; you are just lucky that my aims happened to align with your interests this time. So watch your back, buddy!

There seems to be a sort of correlation here: the more easily an agent-relative theory can avoid self-defeat, the more it seems to get our moral motivations wrong. Agent-centered deontology isn’t much troubled by self-defeat, but it seems to make a complete mess of the reasons for acting morally: we should have literally no regard for other people. Instead, we should care about abstract rules and avoid breaking them so that no one can fault us. It comes scarily close to sounding like a psychopath pretending to care about morality. Special-obligations utilitarianism, on the other hand, has a harder time accepting self-defeat. But at the same time, the motivations it gives us seem less problematic: I really should care about other people and their interests. Still, I should value the people close to me more than other people, and in this sense the theory still feels somewhat self-serving.

Agent-neutral theories don’t escape these charges completely either, though. On hedonistic utilitarianism, for example, people seem to be reduced to something like pleasure-receptacles. The reason I helped you out wasn’t because I wanted to help you, silly! It was because I figured that doing so would best promote the aggregate pleasure of the world—you are just lucky that you were the best candidate for having the pleasure this time. I think the only kind of theory that truly avoids this sort of problem is some kind of situation ethics, where you just consider the situation and the people involved in each specific case. Here I can truly say that the reason I helped you out was because I cared about you.

I don’t think this shows that we should all become situation ethicists, though. There are several desiderata for an ethical theory, and while getting this sort of moral motivation right isn’t the only one, it does seem to be one. And it is one on which agent-relative theories do worse than agent-neutral ones, and the more agent-relative they are, the worse they do.

Conclusion

So it looks like we didn’t get the satisfying knock-down argument we were looking for; agent-relative theories are not inconsistent, even though they are self-defeating. Rather, the problem has been moved to the arena of inter-theoretical comparison, where it is much more modest. Instead of a proof against agent-relativity, we have one of several considerations that should carry weight when deciding which theory to adopt, meaning it can in principle be overcome if the reasons in favor of agent-relativity are strong enough. But even if we didn’t get the forceful objection we were looking for, it is also far from a trivial cost to the theories.

1. Anyone who has read Reasons and Persons will also feel some déjà vu at several points in this post.
